Training Efficient Controllers via Analytic Policy Gradient

Abstract

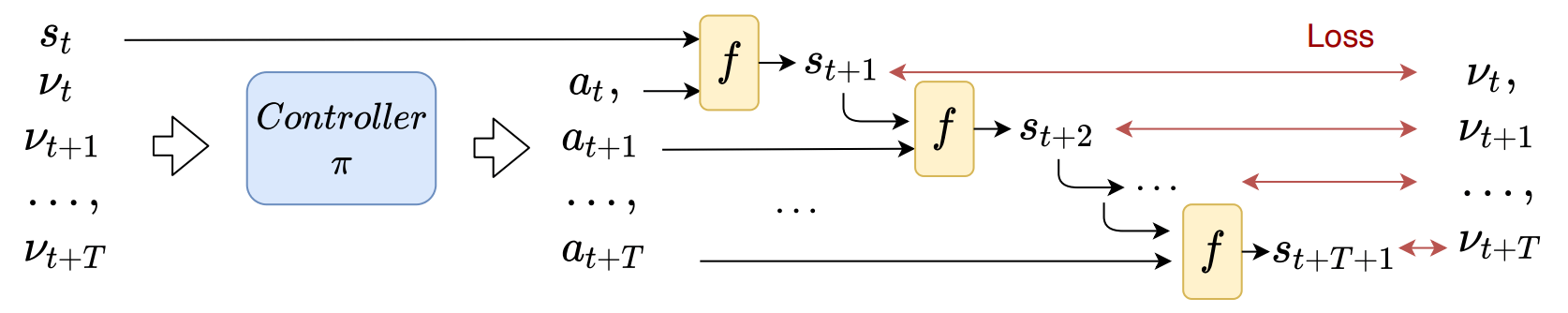

Control design for robotic systems is complex and often requires solving an optimization problem to follow a trajectory accurately. Online optimization approaches like Model Predictive Control (MPC) achieve great tracking performance but require high computing power. Conversely, learning-based offline optimization approaches, such as Reinforcement Learning (RL), allow fast execution on the robot but rarely match the accuracy of MPC. We propose an Analytic Policy Gradient (APG) method to tackle this problem. APG exploits the availability of differentiable simulators by training a controller offline with gradient descent on the tracking error. We address training instabilities through curriculum learning and experiment on a controls benchmark (CartPole) and two common aerial robots, a quadrotor and a fixed-wing drone. Our proposed method outperforms both model-based and model-free RL methods in terms of tracking error while achieving similar performance to MPC with more than an order of magnitude less computation time.
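To make the core idea concrete, the following is a minimal JAX sketch of training a controller by backpropagating the tracking error through a differentiable simulator. It is not the authors' implementation: the 1-D point-mass dynamics, the linear feedback policy, and all names and constants (`step`, `policy`, `tracking_loss`, `DT`, `HORIZON`, the learning rate) are assumptions chosen for illustration, and the curriculum learning mentioned above is omitted.

```python
import jax
import jax.numpy as jnp

DT = 0.05      # integration step in seconds (assumed for this toy example)
HORIZON = 20   # number of simulated steps per rollout (assumed)


def step(state, action):
    """Toy differentiable dynamics: a 1-D point mass with state [position, velocity]."""
    vel = state[1] + DT * action
    pos = state[0] + DT * vel
    return jnp.array([pos, vel])


def policy(params, state, target):
    """Hypothetical linear feedback policy; params = [k_pos, k_vel]."""
    err = jnp.array([target - state[0], -state[1]])
    return jnp.dot(params, err)


def tracking_loss(params, state0, reference):
    """Roll the policy out through the simulator and average the squared position error."""
    def body(state, target):
        nxt = step(state, policy(params, state, target))
        return nxt, (nxt[0] - target) ** 2

    _, errs = jax.lax.scan(body, state0, reference)
    return jnp.mean(errs)


# Analytic gradient of the tracking error with respect to the policy parameters,
# obtained by differentiating through every simulation step of the rollout.
grad_fn = jax.jit(jax.value_and_grad(tracking_loss))


def train(params, state0, reference, lr=0.2, iters=300):
    """Plain gradient descent on the tracking error."""
    losses = []
    for _ in range(iters):
        loss, grads = grad_fn(params, state0, reference)
        params = params - lr * grads
        losses.append(float(loss))
    return params, losses


state0 = jnp.array([0.0, 0.0])
reference = jnp.ones(HORIZON)  # constant set-point at position 1.0
params, losses = train(jnp.zeros(2), state0, reference)
```

After training, `losses` holds the tracking error per iteration and `params` the learned gains. At deployment only `policy` needs to be evaluated, which is the intuition behind the low runtime cost compared with solving an optimization online.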

Resources

arXiv: 2209.13052

Citation

@inproceedings{wiedemann2023apg,
  title = {Training Efficient Controllers via Analytic Policy Gradient},
  author = {Wiedemann, Nina and W{\"{u}}est, Valentin and Loquercio, Antonio and M{\"{u}}ller, Matthias and Floreano, Dario and Scaramuzza, Davide},
  booktitle = {IEEE International Conference on Robotics and Automation (ICRA)},
  year = {2023}
}