CLNeRF: Continual Learning Meets NeRF

Abstract

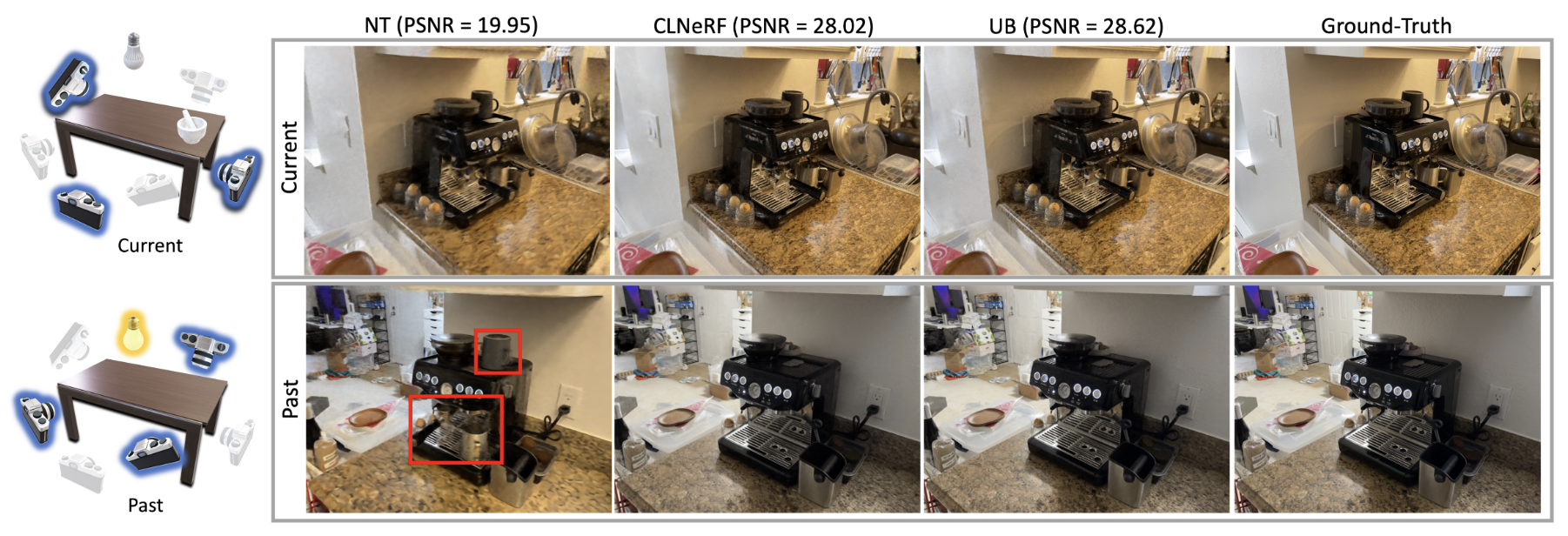

Novel view synthesis aims to render unseen views given a set of calibrated images. In practical applications, the coverage, appearance or geometry of the scene may change over time, with new images continuously being captured. Efficiently incorporating such continuous change is an open challenge. We propose CLNeRF, which introduces continual learning (CL) to Neural Radiance Fields (NeRFs). CLNeRF combines generative replay and the Instant Neural Graphics Primitives (NGP) architecture to effectively prevent catastrophic forgetting and efficiently update the model when new data arrives. We also add trainable appearance and geometry embeddings to NGP, allowing a single compact model to handle complex scene changes. Without the need to store historical images, CLNeRF trained sequentially over multiple scans of a changing scene performs on par with the upper-bound model trained on all scans at once.
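To make the generative replay idea concrete, the following is a minimal sketch, not the CLNeRF implementation. It assumes that only the camera poses of earlier scans are kept, and that the previously trained model renders pseudo ground truth for those poses while real images supply labels for the new scan. The names `build_replay_batch`, `Pose`, `Color` and the toy `old_model` are hypothetical stand-ins; a real system would operate on rays and an NGP-style network.

```python
import random
from typing import Callable, List, Tuple

# Hypothetical stand-ins: a real pipeline would use camera rays and RGB values.
Pose = float
Color = float
Model = Callable[[Pose], Color]


def build_replay_batch(
    old_model: Model,
    old_poses: List[Pose],
    new_samples: List[Tuple[Pose, Color]],
    batch_size: int,
    rng: random.Random,
) -> List[Tuple[Pose, Color]]:
    """Mix real samples from the new scan with labels generated by the old model."""
    n_old, n_new = len(old_poses), len(new_samples)
    batch: List[Tuple[Pose, Color]] = []
    for _ in range(batch_size):
        # Sample uniformly over all known cameras, old and new alike
        # (an assumption of this sketch).
        idx = rng.randrange(n_old + n_new)
        if idx < n_old:
            pose = old_poses[idx]
            # Replayed sample: the label is rendered on demand, not read from disk.
            batch.append((pose, old_model(pose)))
        else:
            # Real sample: the label comes from a newly captured image.
            batch.append(new_samples[idx - n_old])
    return batch


def old_model(pose: Pose) -> Color:
    """Toy 'previous model' that maps a pose to a colour value."""
    return 2.0 * pose


old_poses = [0.0, 1.0, 2.0]                # poses kept from earlier scans
new_samples = [(10.0, 0.5), (11.0, 0.7)]   # (pose, observed colour) from the new scan
batch = build_replay_batch(old_model, old_poses, new_samples,
                           batch_size=8, rng=random.Random(0))
```

Training the updated model on batches like `batch` exposes it to both the new scan and the old model's renderings of earlier viewpoints, which is how replay counters forgetting without storing historical images.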

Resources

arXiv: 2308.14816

Video

Citation

@inproceedings{cai2023clnerf,
  title = {{CLNeRF}: Continual Learning Meets {NeRF}},
  author = {Cai, Zhipeng and M{\"{u}}ller, Matthias},
  booktitle = {IEEE/CVF International Conference on Computer Vision (ICCV)},
  year = {2023}
}