E2PNet: Event to Point Cloud Registration with Spatio-Temporal Representation Learning

Abstract

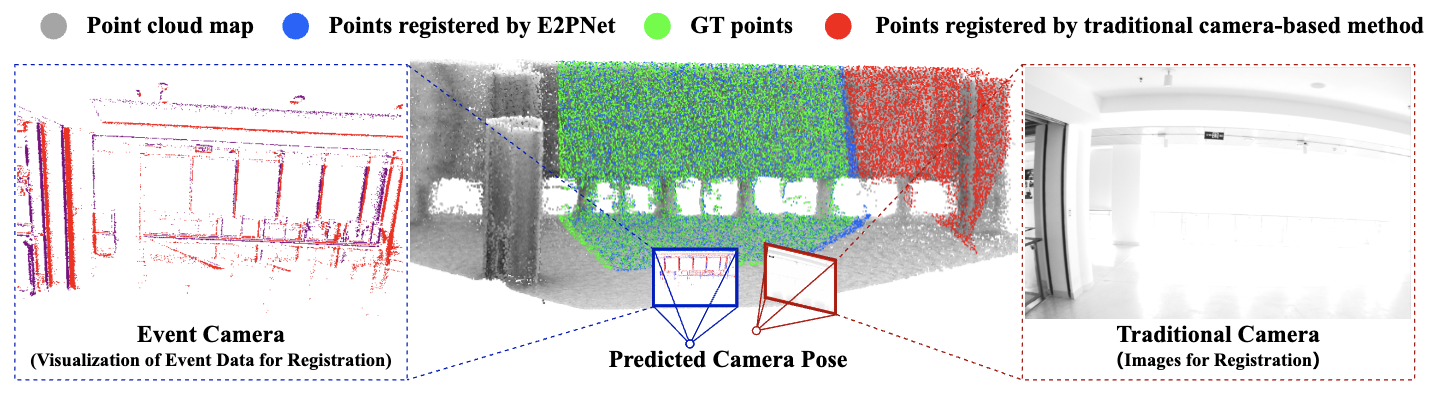

Event cameras have emerged as promising vision sensors due to their unparalleled temporal resolution and dynamic range. While registration of 2D RGB images to 3D point clouds is a long-standing problem, no prior work studies 2D-3D registration for event cameras. We propose E2PNet, the first learning-based method for event-to-point cloud registration. The core of E2PNet is a novel feature representation network called Event-Points-to-Tensor (EP2T), which encodes event data into a 2D grid-shaped feature tensor. Unlike standard 3D learning architectures that treat all dimensions of point clouds equally, the novel sampling and information aggregation modules in EP2T are designed to handle the inhomogeneity of the spatial and temporal dimensions. Experiments on the MVSEC and VECtor datasets demonstrate the superiority of E2PNet over hand-crafted and other learning-based methods. Compared to RGB-based registration, E2PNet is more robust to extreme illumination and fast motion because it operates on event data.
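
To make "a 2D grid-shaped feature tensor" concrete, the sketch below shows one simple hand-crafted way of turning raw events into a grid: a per-pixel event count split by polarity. This is not EP2T and not code from the paper; the `(x, y, t, polarity)` event layout, the function name `events_to_count_grid`, and the two-channel output are assumptions made for illustration only.

```python
from typing import List, Tuple

# Assumed event layout (hypothetical, for illustration):
# (x, y, t, polarity) with polarity +1 or -1.
Event = Tuple[int, int, float, int]


def events_to_count_grid(
    events: List[Event], height: int, width: int
) -> List[List[List[int]]]:
    """Accumulate events into a 2 x H x W count grid.

    Channel 0 counts positive-polarity events, channel 1 counts
    negative-polarity events. Timestamps are ignored entirely.
    """
    grid = [[[0] * width for _ in range(height)] for _ in range(2)]
    for x, y, _t, polarity in events:
        # Skip events that fall outside the sensor plane.
        if 0 <= x < width and 0 <= y < height:
            channel = 0 if polarity > 0 else 1
            grid[channel][y][x] += 1
    return grid


# Three toy events on a 2 x 3 sensor.
events = [(0, 0, 0.001, 1), (0, 0, 0.002, 1), (2, 1, 0.003, -1)]
grid = events_to_count_grid(events, height=2, width=3)
```

Note that this count grid discards the time dimension altogether, whereas the abstract describes EP2T as treating the spatial and temporal dimensions with dedicated sampling and aggregation modules.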

Resources

arXiv: 2311.18433

Citation

@inproceedings{lin2023e2pnet,
  title = {{E2PNet}: Event to Point Cloud Registration with Spatio-Temporal Representation Learning},
  author = {Lin, Xiuhong and Qiu, Changjie and Cai, Zhipeng and Shen, Siqi and Zang, Yu and Liu, Weiquan and Bian, Xuesheng and M{\"{u}}ller, Matthias and Wang, Cheng},
  booktitle = {Advances in Neural Information Processing Systems (NeurIPS)},
  year = {2023}
}