Training Graph Neural Networks with 1000 Layers

Abstract

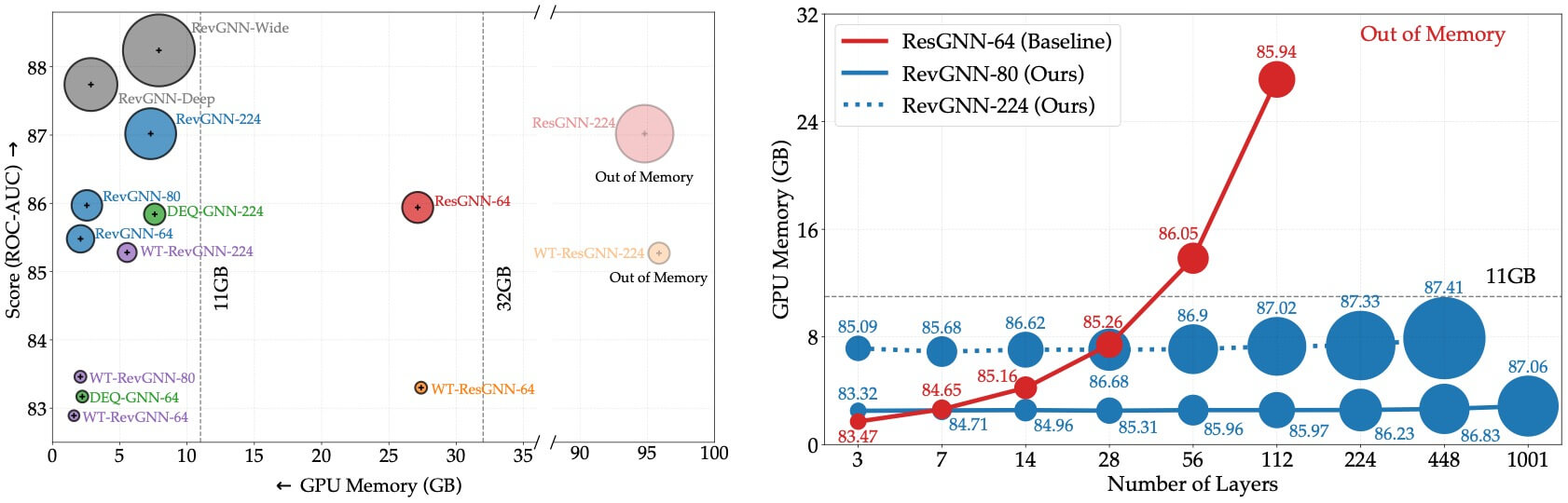

Deep graph neural networks (GNNs) have achieved excellent results on various tasks on large graph datasets. However, memory complexity has become a major obstacle when training deep GNNs for practical applications. In this work, we study reversible connections, group convolutions, weight tying, and equilibrium models to advance the memory and parameter efficiency of GNNs. We find that reversible connections in combination with deep network architectures enable the training of overparameterized GNNs that significantly outperform existing methods on multiple datasets. Our models RevGNN-Deep (1001 layers) and RevGNN-Wide (448 layers) were both trained on a single commodity GPU and achieve ROC-AUC scores of 87.74 and 88.24, respectively, on the ogbn-proteins dataset. RevGNN-Deep is the deepest GNN in the literature by one order of magnitude.
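To illustrate why reversible connections reduce memory, the following is a minimal sketch of a generic two-group reversible residual block in plain Python. It is not the authors' implementation: the toy layer `make_layer`, the helpers `rev_forward` and `rev_inverse`, the small graph, and all weights are assumptions chosen for illustration. The point it shows is that a block's inputs can be recomputed exactly from its outputs, so intermediate activations need not be stored for every layer during training.

```python
import math

def make_layer(weight, neighbors):
    """Toy graph layer (hypothetical): mean over neighbors, scale, then tanh."""
    def layer(x):
        return [math.tanh(weight * sum(x[j] for j in nbrs) / len(nbrs))
                for nbrs in neighbors]
    return layer

def rev_forward(x1, x2, f, g):
    """Reversible block: each feature group is updated from the other one."""
    y1 = [a + b for a, b in zip(x1, f(x2))]
    y2 = [a + b for a, b in zip(x2, g(y1))]
    return y1, y2

def rev_inverse(y1, y2, f, g):
    """Recover the block inputs from its outputs by undoing the two updates."""
    x2 = [a - b for a, b in zip(y2, g(y1))]
    x1 = [a - b for a, b in zip(y1, f(x2))]
    return x1, x2

# A 4-node path graph with self-loops (illustrative data only).
neighbors = [[0, 1], [0, 1, 2], [1, 2, 3], [2, 3]]
f = make_layer(0.7, neighbors)
g = make_layer(-1.3, neighbors)

# Node features split into two groups, one scalar channel per group.
x1 = [0.5, -1.0, 2.0, 0.25]
x2 = [1.5, 0.0, -0.75, 3.0]

# Stack three reversible blocks, keeping only the final output.
num_blocks = 3
h1, h2 = x1, x2
for _ in range(num_blocks):
    h1, h2 = rev_forward(h1, h2, f, g)

# Walk back through the stack to reconstruct the original inputs.
r1, r2 = h1, h2
for _ in range(num_blocks):
    r1, r2 = rev_inverse(r1, r2, f, g)

max_error = max(abs(a - b) for a, b in zip(r1 + r2, x1 + x2))
print("reconstruction error:", max_error)
```

In a real training setup the backward pass would reconstruct each block's input on the fly in this way and then compute gradients, trading extra computation for activation memory that does not grow with depth.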

Resources

arXiv: 2106.07476

Citation

@inproceedings{li2021gnn1000,
  title = {Training Graph Neural Networks with 1000 Layers},
  author = {Li, Guohao and M{\"{u}}ller, Matthias and Ghanem, Bernard and Koltun, Vladlen},
  booktitle = {International Conference on Machine Learning (ICML)},
  year = {2021}
}