LDM3D: Latent Diffusion Model for 3D

Abstract

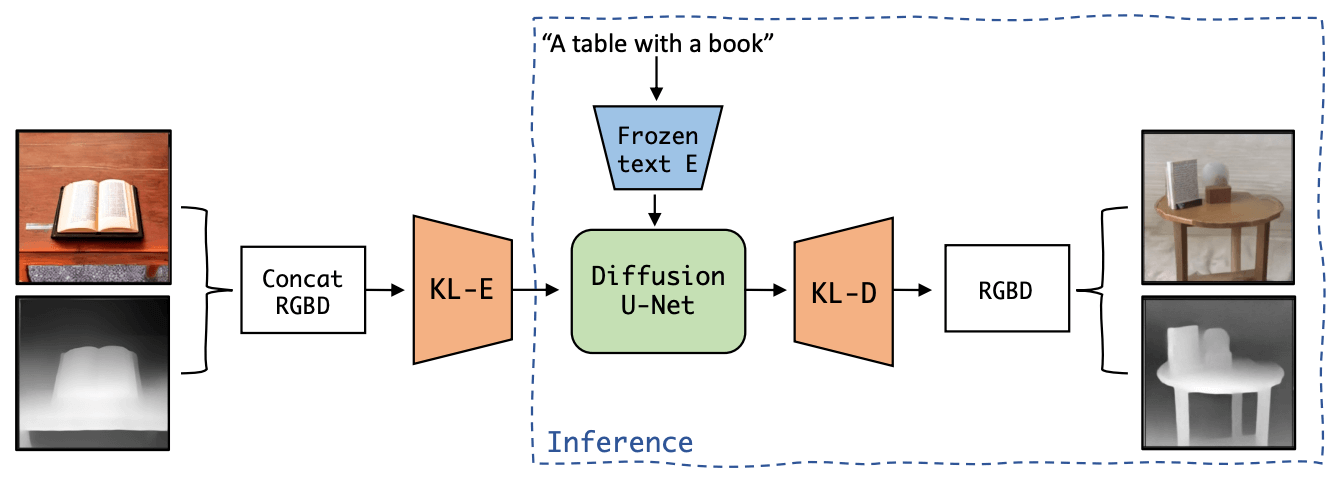

This paper proposes a Latent Diffusion Model for 3D (LDM3D) that generates both an image and a depth map from a given text prompt, allowing users to create RGBD images from text. The model is fine-tuned on a dataset of tuples, each containing an RGB image, a depth map, and a caption, and is validated through extensive experiments. We also develop DepthFusion, an application that uses the generated RGB images and depth maps to create immersive, interactive 360-degree-view experiences in TouchDesigner. This technology has the potential to transform a wide range of industries, from entertainment and gaming to architecture and design.
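To make the term "RGBD image" concrete, the following minimal sketch pairs each RGB pixel with the depth value at the same location. It is an illustration only, not the paper's implementation: the helper name `pack_rgbd` and the nested-list image representation are assumptions chosen to keep the example dependency-free, whereas a real pipeline would typically work with array or tensor types.

```python
from typing import List, Tuple

RGBPixel = Tuple[int, int, int]
RGBDPixel = Tuple[int, int, int, float]


def pack_rgbd(rgb: List[List[RGBPixel]],
              depth: List[List[float]]) -> List[List[RGBDPixel]]:
    """Combine an H x W RGB image and an H x W depth map into H x W RGBD pixels.

    Hypothetical helper for illustration; not part of LDM3D itself.
    """
    # Both inputs must describe the same pixel grid.
    same_height = len(rgb) == len(depth)
    same_widths = all(len(r) == len(d) for r, d in zip(rgb, depth))
    if not (same_height and same_widths):
        raise ValueError("RGB image and depth map must have the same height and width")

    # Append the depth value as a fourth channel to every pixel.
    return [
        [(r, g, b, z) for (r, g, b), z in zip(rgb_row, depth_row)]
        for rgb_row, depth_row in zip(rgb, depth)
    ]


# A 1 x 2 image: a red pixel at depth 0.5 and a green pixel at depth 1.0.
rgbd = pack_rgbd([[(255, 0, 0), (0, 255, 0)]], [[0.5, 1.0]])
```

Each resulting pixel carries color and depth together, which is the kind of per-pixel information an application needs in order to render a scene with parallax.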

Resources

arXiv: 2305.10853

Video

Citation

@inproceedings{stan2023ldm3d,
  title = {{LDM3D}: Latent Diffusion Model for {3D}},
  author = {Stan, Gabriela Ben Melech and Wofk, Diana and Fox, Scottie and Redden, Alex and Saxton, Will and Yu, Jean and Aflalo, Estelle and Tseng, Shao-Yen and Nonato, Fabio and M{\"{u}}ller, Matthias and Lal, Vasudev},
  booktitle = {CVPR Workshops},
  year = {2023}
}
