L-MAGIC: Language Model Assisted Generation of Images with Coherence

Abstract

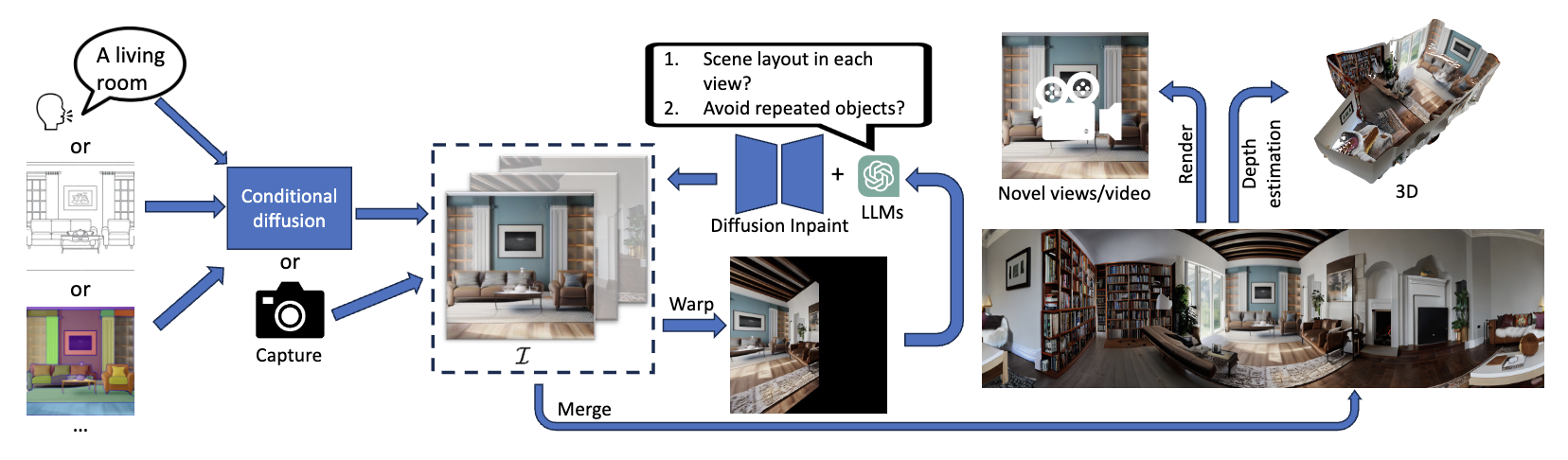

In the current era of generative AI breakthroughs, generating panoramic scenes from a single input image remains a key challenge. Most existing methods use diffusion-based iterative or simultaneous multi-view inpainting, but the lack of global scene layout priors leads to subpar outputs with duplicated objects or requires time-consuming human text inputs for each view. We propose L-MAGIC, a novel method leveraging large language models for guidance while diffusing multiple coherent views of 360-degree panoramic scenes. L-MAGIC harnesses pre-trained diffusion and language models without fine-tuning, ensuring zero-shot performance. The output quality is further enhanced by super-resolution and multi-view fusion techniques. Extensive experiments demonstrate that the resulting panoramic scenes feature better scene layouts and perspective view rendering quality compared to related works, with >70% preference in human evaluations. Combined with conditional diffusion models, L-MAGIC can accept various input modalities including text, depth maps, sketches, and colored scripts.
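As a rough illustration of generating multiple views under a global layout prior, the sketch below plans evenly spaced view directions around a full 360-degree turn and pairs each with a per-view prompt. It is a minimal sketch under stated assumptions, not the authors' implementation: the names `view_yaws`, `adjacent_overlap`, `plan_panorama`, and `toy_layout` are hypothetical, the even spacing and field of view are illustrative choices, and `toy_layout` is only a placeholder for where a language model would supply per-view scene descriptions.

```python
from typing import Callable, Dict, List


def view_yaws(num_views: int) -> List[float]:
    """Evenly spaced camera yaw angles (degrees) covering a full 360-degree turn."""
    if num_views < 1:
        raise ValueError("num_views must be positive")
    step = 360.0 / num_views
    return [i * step for i in range(num_views)]


def adjacent_overlap(num_views: int, fov_deg: float) -> float:
    """Horizontal overlap (degrees) between neighbouring views.

    A negative value means the views leave a gap in the panorama.
    """
    return fov_deg - 360.0 / num_views


def plan_panorama(
    input_caption: str,
    num_views: int,
    layout_fn: Callable[[str, int], List[str]],
) -> List[Dict[str, object]]:
    """Pair each view direction with a per-view prompt from a layout prior."""
    prompts = layout_fn(input_caption, num_views)
    if len(prompts) != num_views:
        raise ValueError("layout_fn must return exactly one prompt per view")
    return [
        {"yaw_deg": yaw, "prompt": prompt}
        for yaw, prompt in zip(view_yaws(num_views), prompts)
    ]


def toy_layout(caption: str, num_views: int) -> List[str]:
    """Hypothetical stand-in for a language model (an assumption, not the paper's prompt).

    Only view 0, which contains the input image, reuses the caption verbatim;
    the other views get a generic description so the main objects are not
    simply repeated in every direction.
    """
    return [caption] + [f"surroundings consistent with: {caption}"] * (num_views - 1)
```

For example, `plan_panorama("a bedroom", 6, toy_layout)` yields six entries spaced 60 degrees apart, and `adjacent_overlap(6, 100.0)` indicates how much neighbouring views would share if each covered a 100-degree field of view. In a real pipeline, each entry would condition a diffusion-based inpainting step, and the overlapping regions are where multi-view fusion would apply.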

Resources

arXiv: 2406.01867

Video

Citation

@inproceedings{cai2024lmagic,
  title = {{L-MAGIC}: Language Model Assisted Generation of Images with Coherence},
  author = {Cai, Zhipeng and M{\"{u}}ller, Matthias and others},
  booktitle = {IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2024}
}